6/23/2023 Sqlite file

You are right, because on today's computer (laptops & desktops, not supercomputers or datacenter servers), a hundred gigabyte is still a quite large disk space. BTW, that practical limit also impacts other SQL RDBMS like PostGreSQL or MariaDB (but most of these are keeping data in several files, which you might keep on different file systems, and some of them are able to manage distributed data on remote machines.)Īfter reading this article I'm still not convinced to ever consider SQLite for anything that might require hundreds of gigabytes

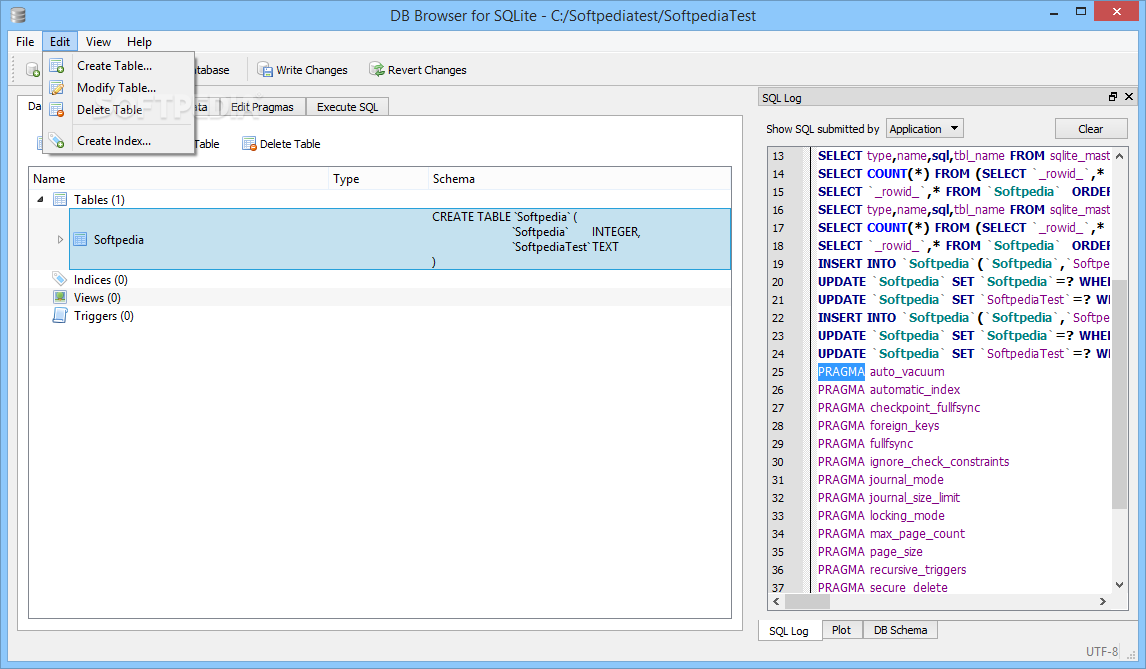

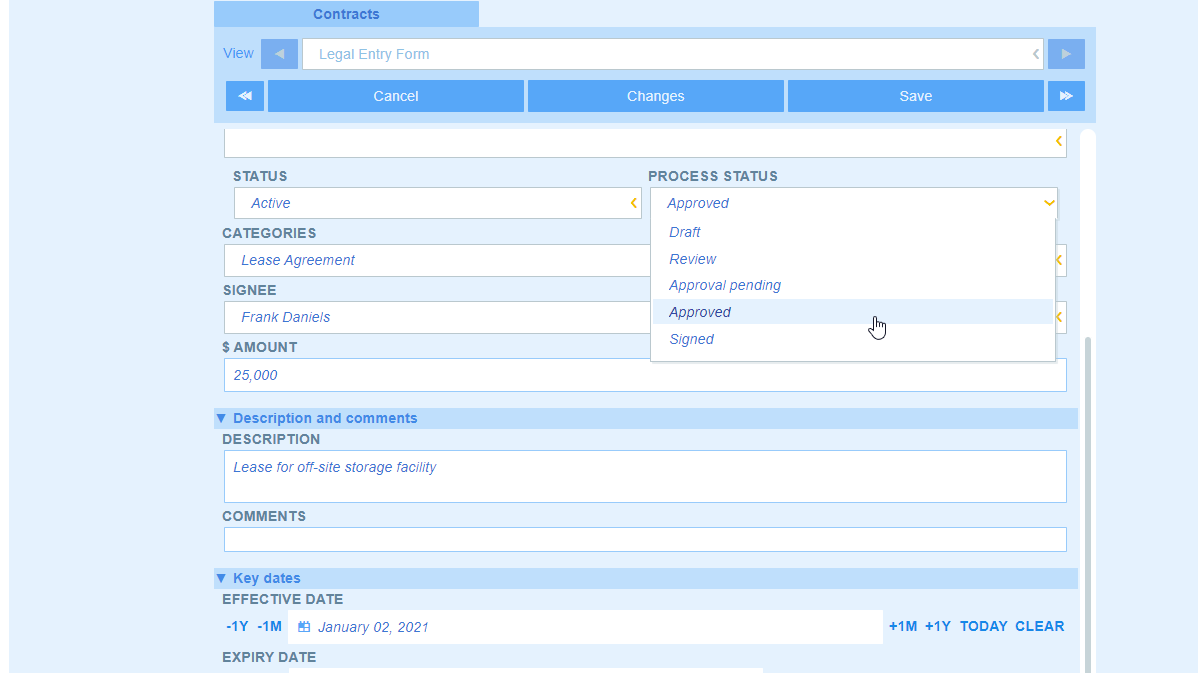

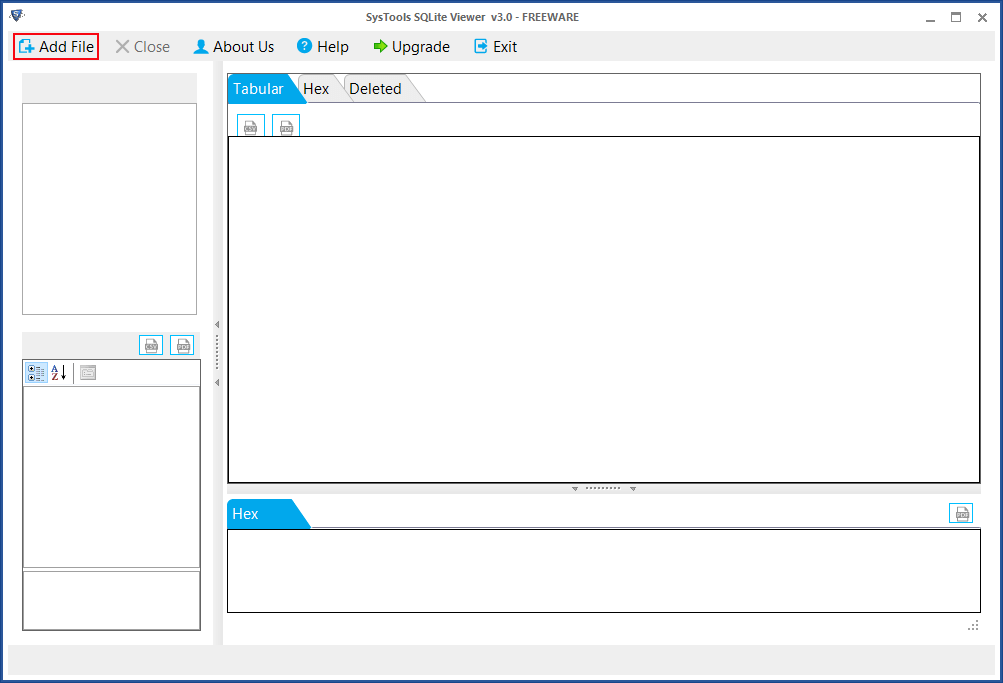

On my current Linux desktop I cannot afford much bigger than a 350Gbyte file (because as a rule of thumb I avoid having one single file eating more than half a disk partition). And that limit depends a lot of your computer & system. The realistic limit (of the size of some Sqlite database) is the same as the realistic limit for a data file. What is a realistic max size limit for a SQLite database in real-world use? But I am wondering if I have been underestimating its capabilities. I have only considered SQLite for situations where the database would be tiny dozens of megabytes at most.Īfter reading this article I'm still not convinced to ever consider SQLite for anything that might require hundreds of gigabytes. I'm not a DBA, so this is what I would consider "large" from my direct experience. Of those, at least to my knowledge, none were approaching 140TB. I have also worked indirectly with much larger databases using Oracle, Postgres, or Cassandra. In general, I agree with this, but I was surprised to learn that SQLite's maximum limit was so high! In my experience I have used quite a few SQL Server databases in the size of ~30-100GB. So if you are contemplating databases of this magnitude, you would do well to consider using a client/server database engine that spreads its content across multiple disk files, and perhaps across multiple volumes. And even if it could handle larger databases, SQLite stores the entire database in a single disk file and many filesystems limit the maximum size of files to something less than this. Now you’re good to go and create reports.According to this article on Appropriate Uses For SQLite it says that, while SQLite is limited to 140 terabytes, a client/server RDBMS may work better:Īn SQLite database is limited in size to 140 terabytes (2 47 bytes, 128 tibibytes). 
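The numbers in this discussion are easy to check yourself. The following is a minimal sketch, not a definitive tool: it converts the quoted 2^47-byte ceiling into terabytes and tebibytes, reads a database's size from SQLite's own `PRAGMA page_count` and `PRAGMA page_size`, and applies the "no single file bigger than half the partition" rule of thumb. The function names and the throwaway `demo.db` file are assumptions made for illustration.

```python
import os
import shutil
import sqlite3
import tempfile

# Ceiling quoted in the text: 2**47 bytes.
SQLITE_LIMIT_BYTES = 2 ** 47


def database_size_bytes(db_path):
    """Size of the main database file, computed as page_count * page_size."""
    conn = sqlite3.connect(db_path)
    try:
        page_count = conn.execute("PRAGMA page_count").fetchone()[0]
        page_size = conn.execute("PRAGMA page_size").fetchone()[0]
    finally:
        conn.close()
    return page_count * page_size


def exceeds_half_partition(db_path):
    """Rule of thumb from the text: one file should not exceed half its partition."""
    directory = os.path.dirname(os.path.abspath(db_path))
    total_bytes = shutil.disk_usage(directory).total
    return database_size_bytes(db_path) > total_bytes / 2


if __name__ == "__main__":
    print(SQLITE_LIMIT_BYTES / 10 ** 12)  # limit in decimal terabytes
    print(SQLITE_LIMIT_BYTES / 2 ** 40)   # limit in tebibytes

    # Hypothetical throwaway database, only to exercise the helpers.
    with tempfile.TemporaryDirectory() as tmp_dir:
        demo_path = os.path.join(tmp_dir, "demo.db")
        conn = sqlite3.connect(demo_path)
        conn.execute("CREATE TABLE t (x INTEGER)")
        conn.commit()
        conn.close()
        print(database_size_bytes(demo_path))
        print(exceeds_half_partition(demo_path))
```

Pointing `exceeds_half_partition` at a real database path gives a quick yes/no on whether the file has outgrown that personal rule of thumb on the machine where it lives.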
As in other Power BI scenarios, it's really easy to load data from an existing SQLite database into a Power BI Desktop model. You just need to use an ODBC data connection and connect to a predefined "ODBC DSN" or use a "Connection String". After you load the data into Power BI Desktop, you create the relationships, and then you're good to go and create flashy reports. Continue reading if you're interested in a step-by-step guide to visualising SQLite data in Power BI.

Importing SQLite Data to Power BI Using ODBC DSN

1. After you have downloaded and installed the SQLite ODBC Driver, open the correct version (32-bit or 64-bit) of ODBC.
2. Click the "Drivers" tab and make sure the SQLite ODBC Driver(s) were installed successfully.
3. Select the appropriate driver from the list; in my case it is "SQLite3 ODBC Driver".
4. Click "Browse" and locate your SQLite database, then click OK.

You have now created an ODBC DSN that can be used in Power BI to connect to SQLite.

1. Select "ODBC" from "Get Data", then click "Connect".
2. Select the DSN you created earlier from the dropdown list, then click OK.
3. Click the "Default or Custom" tab, then click "Connect".
4. Select the desired tables from the list, then load them.

After you have imported the data into Power BI, you need to manually create the relationships between the tables.
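Because the relationships have to be recreated by hand in Power BI, it can help to first list the foreign keys the SQLite database itself declares. The following is a minimal sketch using Python's standard `sqlite3` module and SQLite's `PRAGMA foreign_key_list`; the helper name `list_relationships` and the two demo tables are hypothetical, and a database that declares no foreign keys will simply return an empty list.

```python
import sqlite3


def list_relationships(conn):
    """Return (child_table, child_column, parent_table, parent_column) tuples."""
    tables = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
    relationships = []
    for table in tables:
        # Quote the table name so unusual identifiers do not break the PRAGMA.
        quoted = '"' + table.replace('"', '""') + '"'
        for fk in conn.execute(f"PRAGMA foreign_key_list({quoted})"):
            # Row layout: id, seq, table, from, to, on_update, on_delete, match
            relationships.append((table, fk[3], fk[2], fk[4]))
    return relationships


if __name__ == "__main__":
    # Hypothetical two-table schema, only to demonstrate the helper.
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE DimCustomer (
            CustomerKey INTEGER PRIMARY KEY,
            Name TEXT
        );
        CREATE TABLE FactInternetSales (
            SalesKey INTEGER PRIMARY KEY,
            CustomerKey INTEGER REFERENCES DimCustomer(CustomerKey),
            Amount REAL
        );
    """)
    for child, child_col, parent, parent_col in list_relationships(conn):
        print(f"{child}.{child_col} -> {parent}.{parent_col}")
    conn.close()
```

Each printed pair corresponds to one relationship you would then draw between the matching tables in the Power BI model view.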

Note: If you just want to try this and don't currently have a SQLite database, you can download a SQLite version of "Internet Sales" from AdventureWorksDW2016 that I made available for you.

Note: You may install both the 32-bit and 64-bit versions of the driver if your other applications are 32-bit.
